Advanced RAG Trade-offs

Abstract

Classic RAG addresses contextual search in small, homogeneous knowledge bases. Advanced RAG extends this model to production scale: it adds hybrid search, multi-layer filtering, prompt management, and protective mechanisms. GitHub example.

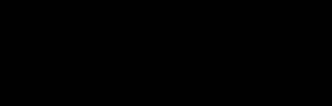

A special role belongs to Sovereign AI – deploying a language model entirely within an organization's own infrastructure, including air-gapped environments with no internet access. This approach is mandatory wherever data cannot leave the security perimeter.

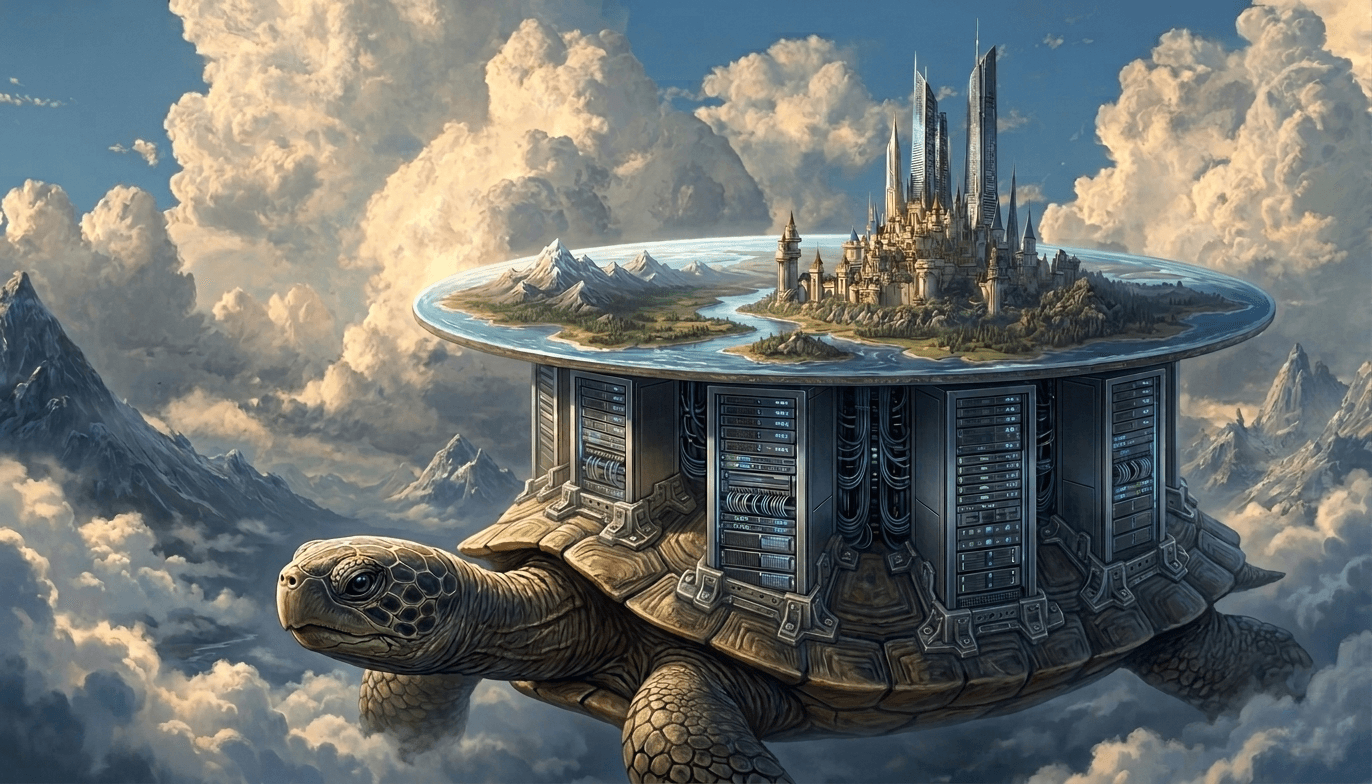

Solution Architecture

Gateway & Auth

The system authenticates and authorizes every request, enforces rate limiting per user and role, validates input data, and protects against prompt injection attacks.
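As an illustration of per-user, per-role rate limiting, here is a minimal token-bucket sketch. The class names, the role table, and the injected clock are assumptions made for this example and are not part of the described system; a production gateway would normally keep this state in a shared store.

```python
import time


class TokenBucket:
    """Permits bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, now: float) -> None:
        self.capacity = capacity
        self.rate = rate
        self.tokens = capacity
        self.updated = now

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class RateLimiter:
    """Keeps one bucket per (user, role); limits_by_role maps role -> (capacity, rate)."""

    def __init__(self, limits_by_role, clock=time.monotonic):
        self._limits = limits_by_role  # hypothetical role table
        self._clock = clock
        self._buckets = {}

    def allow(self, user_id: str, role: str) -> bool:
        now = self._clock()
        key = (user_id, role)
        if key not in self._buckets:
            capacity, rate = self._limits[role]
            self._buckets[key] = TokenBucket(capacity, rate, now)
        return self._buckets[key].allow(now)
```

The clock is injected so the limiter can be tested without sleeping; in-memory buckets only work for a single gateway instance.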

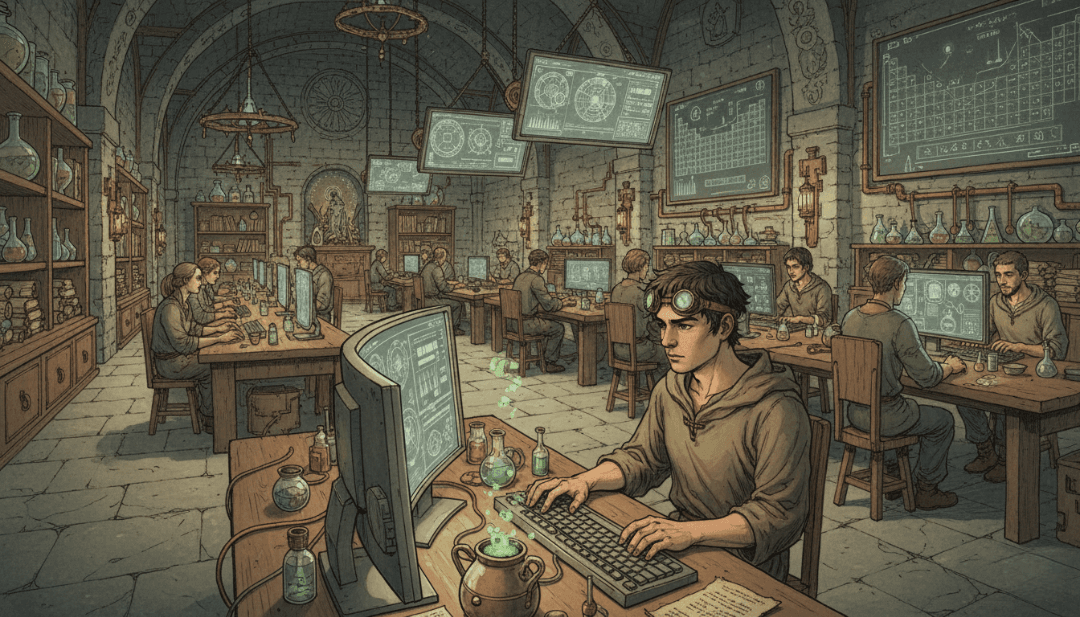

Query Processing

Before retrieval, the user's query goes through three optional transformations. Rewriting reformulates the query to better match the terminology of the knowledge base. This is especially important when users phrase questions in conversational language. Decomposition breaks a complex, multi-part question into several sub-queries, each processed independently with results merged afterward. HyDE (Hypothetical Document Embeddings) generates a hypothetical answer to the query and uses its embedding for retrieval – this improves recall in highly specialized corpora where the query and the document are phrased very differently.
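The three transformations can be sketched as one small function. This is only an outline under stated assumptions: `generate` and `embed` are hypothetical callables standing in for the locally hosted LLM and embedding model, and the prompt strings are placeholders rather than tested templates.

```python
# Placeholder prompts (assumptions); real templates would live in the Prompt Registry.
REWRITE_PROMPT = "Rewrite this question using the knowledge base's terminology: {query}"
DECOMPOSE_PROMPT = "Split this question into independent sub-questions, one per line: {query}"
HYDE_PROMPT = "Write a short passage that would answer this question: {query}"


def build_search_inputs(query, generate, embed, *, rewrite=False, decompose=False, hyde=False):
    """Return a list of (sub_query, vector) pairs to send to dense retrieval."""
    if rewrite:
        query = generate(REWRITE_PROMPT.format(query=query)).strip()

    sub_queries = [query]
    if decompose:
        lines = generate(DECOMPOSE_PROMPT.format(query=query)).splitlines()
        # Fall back to the original query if the model returns nothing usable.
        sub_queries = [line.strip() for line in lines if line.strip()] or [query]

    inputs = []
    for sub_query in sub_queries:
        # HyDE: embed a hypothetical answer instead of the question itself.
        text = generate(HYDE_PROMPT.format(query=sub_query)) if hyde else sub_query
        inputs.append((sub_query, list(embed(text))))
    return inputs
```

Each flag is independent, which matches the "optional" nature of the steps: every enabled transformation costs at least one extra LLM call per query.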

Embedding Model

Converts query text into a vector representation for dense retrieval. The model is deployed locally (Nomic, BGE, or equivalent). In air-gapped environments, model weights are pre-loaded before network isolation. Embedding quality directly determines dense retrieval quality. Switching models requires a full re-indexing of the corpus, because vectors produced by different models are not comparable.

Hybrid Retrieval

Search runs in parallel across two indexes. Dense retrieval searches vector embeddings via an HNSW index in the vector database and captures semantic similarity well. Sparse retrieval searches by keywords using BM25. It is precise on exact terms, abbreviations, and numbers, where semantic search often returns irrelevant results.

RRF Fusion

Reciprocal Rank Fusion merges the results of dense and sparse retrieval into a single ranked list. Each document receives a final score based on its positions in both lists, without the need to normalize heterogeneous scores. This enables correct merging of results from two fundamentally different retrieval methods.
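A minimal sketch of the fusion step: each document scores the sum of 1 / (k + rank) over every list in which it appears. The constant k = 60 is the value commonly used for RRF and is an assumption here, not a figure from this article; the function name and the document IDs are illustrative.

```python
from collections import defaultdict


def rrf_fuse(rankings, k=60):
    """Merge ranked lists of document IDs using Reciprocal Rank Fusion.

    rankings: iterable of lists, each ordered best-first.
    Returns document IDs ordered by fused score, best-first.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by score descending; break ties by ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


dense_hits = ["a", "b", "c"]   # hypothetical dense retrieval order
sparse_hits = ["b", "d", "a"]  # hypothetical BM25 order
fused = rrf_fuse([dense_hits, sparse_hits])
```

Only ranks are used, so the raw cosine similarities and BM25 scores never need to be brought onto a common scale.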

Reranker

A cross-encoder reranker jointly re-scores each query–chunk pair (unlike bi-encoder embeddings, which encode the query and document independently). This yields significantly more accurate ranking at the cost of additional compute. It is applied to the Top-K results after fusion, not to the entire corpus.
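The "Top-K only" constraint can be sketched as follows. `score_pair` is a hypothetical callable standing in for a locally hosted cross-encoder that scores one query and chunk pair; the `top_k` and `top_n` defaults are illustrative assumptions.

```python
def rerank(query, candidates, score_pair, top_k=50, top_n=5):
    """Re-score only the first top_k fused candidates and keep the best top_n."""
    head = candidates[:top_k]  # never score the whole corpus
    scored = [(score_pair(query, chunk), chunk) for chunk in head]
    # Stable sort: chunks with equal scores keep their retrieval order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:top_n]]
```

Compute cost grows linearly with `top_k`, since every candidate requires one joint pass through the model, so `top_k` is the main knob for trading ranking quality against latency.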

Guardrails

Before returning a response, the system checks it for hallucinations, toxicity, and policy compliance. If a check fails, the request is sent for re-generation.
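A minimal sketch of the check-and-regenerate loop. The bound on attempts and the fallback message are assumptions added for this example so that the loop cannot run indefinitely; `generate` and the entries in `checks` are hypothetical callables.

```python
FALLBACK = "Sorry, a reliable answer could not be produced for this request."


def generate_with_guardrails(generate, checks, max_attempts=3, fallback=FALLBACK):
    """Call generate() until every check passes or attempts run out.

    checks: dict mapping a check name to a function(answer) -> bool (True = passed).
    Returns (answer, attempts_used).
    """
    for attempt in range(1, max_attempts + 1):
        answer = generate()
        if all(check(answer) for check in checks.values()):
            return answer, attempt
    # Every attempt failed at least one check: return a safe message instead.
    return fallback, max_attempts
```

Each failed attempt costs a full LLM call, which is why the number of retries is worth capping and monitoring.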

Semantic Cache

Similar queries receive a response from cache without invoking the LLM. TTL policies manage data freshness and prevent stale responses from being served.
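The idea can be sketched with an in-memory list and cosine similarity. The similarity threshold of 0.92, the one-hour TTL, and the linear scan are assumptions chosen for readability; a real deployment would typically back this with the vector database.

```python
import math
import time


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold=0.92, ttl_seconds=3600.0, clock=time.monotonic):
        self._threshold = threshold
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = []  # list of (query_vector, answer, expires_at)

    def put(self, vector, answer):
        self._entries.append((list(vector), answer, self._clock() + self._ttl))

    def get(self, vector):
        """Return the cached answer for the most similar live entry, or None."""
        now = self._clock()
        # TTL policy: drop expired entries before searching.
        self._entries = [entry for entry in self._entries if entry[2] > now]
        best = max(self._entries, key=lambda entry: cosine(vector, entry[0]), default=None)
        if best is not None and cosine(vector, best[0]) >= self._threshold:
            return best[1]
        return None
```

The threshold is the sensitive parameter: set too low, users receive answers to questions they did not ask; set too high, the hit rate collapses.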

Prompt Registry

A centralized store of versioned prompt templates. It enables A/B testing, rollbacks, and separation of templates by task type, all without changing service code.
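A toy in-memory registry shows the versioning and rollback mechanics. The class, its method names, and the template strings are hypothetical; a real registry would persist versions in an external store.

```python
class PromptRegistry:
    """Stores every published template per task and tracks which version is active."""

    def __init__(self):
        self._versions = {}  # task -> list of templates (version 1 is index 0)
        self._active = {}    # task -> active version number

    def publish(self, task, template):
        versions = self._versions.setdefault(task, [])
        versions.append(template)
        self._active[task] = len(versions)  # newest version becomes active
        return len(versions)

    def rollback(self, task, version):
        if not 1 <= version <= len(self._versions.get(task, [])):
            raise ValueError(f"unknown version {version} for task {task!r}")
        self._active[task] = version

    def render(self, task, **variables):
        template = self._versions[task][self._active[task] - 1]
        return template.format(**variables)


registry = PromptRegistry()
registry.publish("qa", "Answer using {context}: {question}")
registry.publish("qa", "Context: {context}\nQuestion: {question}")
registry.rollback("qa", 1)  # services keep calling render(); no code change needed
```

Because services only call `render` with a task name, switching or rolling back a template is a data change rather than a deployment.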

Streaming Response

The response is delivered to the user token by token as it is generated, without waiting for the full output to complete. This reduces perceived latency. An important constraint: guardrails in streaming mode cannot inspect the full response before delivery begins. Full-response checks therefore require either buffering the output or moving some checks to a post-processing layer.
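One possible middle ground between full buffering and no checks is to buffer one sentence at a time. The sketch below assumes that sentence-level checks are meaningful for the policy in question; `is_allowed` is a hypothetical check function and the notice text is a placeholder.

```python
def guarded_stream(tokens, is_allowed, notice="[response withheld]"):
    """Yield text sentence by sentence, checking each sentence before it is sent."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith((".", "!", "?")):
            if not is_allowed(buffer):
                # Stop streaming: text already sent cannot be recalled.
                yield notice
                return
            yield buffer
            buffer = ""
    if buffer:  # trailing text without closing punctuation
        yield buffer if is_allowed(buffer) else notice


tokens = ["Hello", " world", ".", " Secret", " data", "."]
chunks = list(guarded_stream(tokens, lambda text: "Secret" not in text))
```

This keeps perceived latency close to that of raw streaming, but checks that need the whole answer (for example, faithfulness against the retrieved context) still have to run after generation finishes.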

Observability

Self-hosted Langfuse traces every request across all pipeline stages, from gateway to streaming response. RAGAS metrics (faithfulness, answer relevance, context recall) evaluate response quality automatically. The feedback loop collects explicit user ratings and links them to specific traces. In air-gapped environments, the entire observability stack is deployed inside the perimeter.

Sovereign AI & Air-Gapped Deployment

The language model, embedding model, and reranker are deployed on-premise. Container images and model weights are pre-loaded before network isolation. The vector database, cache, Prompt Registry, and observability stack are hosted inside the perimeter. Guardrails run locally with no calls to external moderation APIs.

Trade-offs: Classic vs Advanced RAG

Classic RAG starts quickly and is easy to maintain. It performs well on knowledge bases of up to 10–50K documents with homogeneous content and straightforward queries. Answer accuracy stays around 60–70%, latency is minimal, and the infrastructure requires no specialized expertise.

Advanced RAG addresses problems that Classic RAG cannot solve by design. Dense-only search handles exact terms and abbreviations poorly; sparse retrieval fills that gap. Without a reranker, Top-K results contain irrelevant chunks that degrade generation quality. Without guardrails, hallucinations reach the user. Without semantic cache, every repeated query consumes LLM budget.

The cost of these improvements is measured in latency and operational complexity. The full stack adds 300–700 ms to the base LLM call. The reranker contributes 100–300 ms, guardrails another 50–150 ms, and both require threshold tuning. With proper configuration of semantic cache and async reranking, P95 latency stays within 2–3 seconds, and the number of LLM calls drops by 60–70%. Hybrid retrieval improves accuracy by 15–25% over dense-only, but requires maintaining two synchronized indexes.

Sovereign AI adds operational overhead for managing a GPU cluster and manually updating model weights. Air-gapped deployment requires pre-loading all artifacts before network isolation and eliminates any external dependencies including moderation APIs and cloud-based observability. PII data in traces requires explicit masking when configuring self-hosted Langfuse. Air-gapped environments are particularly resource-sensitive: every component competes for the same CPUs and GPUs inside the perimeter.

Implementation recommendation. Start with Classic RAG. Run it in a dev environment, load real data, and identify the bottlenecks – where accuracy falls short, where latency is unacceptable, where users receive hallucinations. Only once a specific problem is confirmed should you add the corresponding Advanced RAG component. This is especially relevant for air-gapped environments, where resources are constrained and every additional service requires justification.

Integration with Existing Systems

Both Classic and Advanced RAG are deployed as an isolated module behind a dedicated AI Gateway. The rest of the system calls a single API and has no knowledge of which implementation is currently active. This provides several practical advantages.

Switching between implementations is done via blue/green deployment with no changes on the calling service side. Both variants can run simultaneously, with different request types routed to each – for example, Classic RAG for standard FAQ scenarios and Advanced RAG for complex analytical queries. The choice of implementation is made at the AI Gateway level based on request header, user role, or query type.
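A routing rule of this kind might look like the sketch below. The header name `X-RAG-Mode`, the role names, and the query heuristics are all assumptions invented for illustration; the article does not prescribe specific rules.

```python
ADVANCED_ROLES = {"analyst", "compliance"}  # hypothetical roles
LONG_QUERY_WORDS = 25                       # hypothetical threshold


def choose_backend(headers: dict, user_role: str, query: str) -> str:
    """Return "classic" or "advanced" for a single request."""
    # 1. An explicit header wins, which also makes blue/green switching easy to test.
    override = headers.get("X-RAG-Mode", "").lower()
    if override in {"classic", "advanced"}:
        return override
    # 2. Some roles always get the full pipeline.
    if user_role in ADVANCED_ROLES:
        return "advanced"
    # 3. Crude query-type heuristic: long or multi-part questions.
    if len(query.split()) > LONG_QUERY_WORDS or query.count("?") > 1:
        return "advanced"
    return "classic"
```

Keeping the decision in one pure function means the routing policy can be unit-tested and changed without touching either RAG implementation.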

Both variants are stateless services. State is held in external stores: the vector database, cache, and Prompt Registry. This makes horizontal scaling and switching between implementations a configuration-level operation, not a refactoring effort.

Failure Modes and Degradation

Advanced RAG is more complex than Classic RAG and has more potential failure points. It is important to define upfront how the system behaves when each component degrades.

Semantic Cache unavailable. All requests go directly to the LLM. Latency returns to baseline, and call costs grow proportionally with traffic. The system continues to function but loses the primary economic advantage of Advanced RAG.

Guardrails false positive cascade. Guardrails begin blocking valid responses, so the user receives a rejection even though the answer was correct. Each rejection triggers re-generation, so the system enters a re-generation loop and latency spikes sharply. The fix is threshold tuning plus a circuit breaker that disables guardrails when the rejection rate exceeds a defined threshold.
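A circuit breaker of this kind can be sketched with a sliding window over recent guardrail outcomes. The window size, the rejection-rate limit, and the minimum sample count are illustrative assumptions, and the sketch omits the cooldown and alerting a production breaker would need.

```python
from collections import deque


class GuardrailBreaker:
    """Opens when the share of rejected responses in the recent window is too high."""

    def __init__(self, window=20, max_rejection_rate=0.5, min_samples=10):
        self._outcomes = deque(maxlen=window)  # True = response was rejected
        self._max_rate = max_rejection_rate
        self._min_samples = min_samples

    def record(self, rejected: bool) -> None:
        self._outcomes.append(rejected)

    @property
    def is_open(self) -> bool:
        # Too few samples: do not trip on a handful of early rejections.
        if len(self._outcomes) < self._min_samples:
            return False
        return sum(self._outcomes) / len(self._outcomes) > self._max_rate
```

While `is_open` is true the caller skips the guardrail step (or routes the request elsewhere); because the window slides, the breaker closes again on its own once rejections subside.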

Reranker degrades or goes down. Search results are returned without reranking, in the order produced by retrieval. Top-K quality drops, but responses continue to be generated. The reranker is a good candidate for graceful degradation: when unavailable, simply skip the step.

Dense and sparse index desynchronization. Documents are indexed in one store but not the other. RRF Fusion operates on incomplete data, and search quality degrades silently, with no visible error for the user. The problem is detectable only through monitoring of index sizes and RAGAS metrics.
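Beyond comparing index sizes, a periodic job can compare the document IDs held by each index. The sketch assumes both stores can export their IDs; the function name and the sample IDs are hypothetical.

```python
def index_drift(dense_ids, sparse_ids):
    """Report documents present in one index but missing from the other."""
    dense, sparse = set(dense_ids), set(sparse_ids)
    return {
        "missing_in_sparse": sorted(dense - sparse),
        "missing_in_dense": sorted(sparse - dense),
    }


report = index_drift(["doc1", "doc2", "doc3"], ["doc2", "doc3", "doc4"])
in_sync = not (report["missing_in_sparse"] or report["missing_in_dense"])
```

Equal index sizes do not prove the indexes are in sync, since one missing document on each side cancels out; comparing IDs catches that case.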

General degradation principle. If Advanced RAG is too slow, unstable, or generating cascading errors – switch to Classic RAG. This is precisely why both variants are kept behind a single AI Gateway. Classic RAG is not a last-resort fallback; it is a fully legitimate operating mode when the complex stack is under stress.

Resource Estimation

Providing specific recommendations for CPU, GPU, and memory is not meaningful, because consumption depends too heavily on data volume, the choice of embedding model, request frequency, and the vector database in use. Universal numbers do not exist.

Instead, use an iterative approach. Load 1% of your real data into the vector store, then measure latency, resource consumption, and Advanced RAG response quality. Repeat the test at 10%, then 50%, then 100%. At each step, record how system behavior changes. This produces a real scaling curve for your specific data and allows you to forecast production resource requirements before you get there.
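A small harness for recording one point on that curve might look like this. It measures latency only; resource consumption and answer quality would come from the monitoring stack. `run_query` is a hypothetical callable that sends one query through the pipeline, and the nearest-rank P95 is one of several valid percentile definitions.

```python
import math
import time


def p95(samples):
    """Nearest-rank 95th percentile; expects at least one sample."""
    ordered = sorted(samples)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def measure_stage(fraction, run_query, queries, clock=time.perf_counter):
    """Run every test query once and summarize latency for one data-volume stage."""
    latencies = []
    for query in queries:
        started = clock()
        run_query(query)
        latencies.append(clock() - started)
    return {
        "fraction": fraction,  # share of real data loaded, e.g. 0.01
        "queries": len(latencies),
        "p95_seconds": p95(latencies),
    }
```

Calling `measure_stage` with the same query set at 1%, 10%, 50%, and 100% of the data gives directly comparable points for the scaling curve.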

Business Cases

🏦 Financial Services & Banking

Banks process sensitive customer data and are subject to regulatory requirements (GDPR, PCI DSS, Basel III). Sovereign AI eliminates data transfer to external LLM APIs. Guardrails enforce compliance policy adherence. Hybrid retrieval accurately locates regulatory documents by exact terms and codes.

🏥 Healthcare & MedTech

Medical data falls into an especially sensitive category under HIPAA and local data protection regulations. Hallucination detection is critical because an incorrect response about dosage or diagnosis is unacceptable. Sparse retrieval accurately locates medical terms and ICD codes where semantic search returns irrelevant results. Air-gapped deployment is mandatory for clinical systems.

⚖️ Legal Tech

Legal systems require precise search across case law and regulatory documents. Prompt Registry stores versioned templates for different types of legal queries. The reranker surfaces relevant precedents above general documents. The feedback loop accumulates attorney ratings and incrementally improves ranking quality.

🏛️ Government & Defense

Government systems require complete isolation from public networks. Air-gapped deployment with pre-loaded model weights is the only acceptable option. Input Rails protect against prompt injection attacks. All components, including observability, operate inside the secured perimeter with no external dependencies.

🏢 Enterprise Knowledge Management

Enterprise knowledge bases contain tens of thousands of documents in varied formats. Semantic Cache reduces costs by 60–70% for recurring employee queries. Metadata enrichment enables filtering by department, date, or document type. Hybrid retrieval performs equally well on technical specifications and unstructured text.

🔬 R&D / Scientific Research

Research organizations work with proprietary scientific data and patents. Query decomposition breaks complex scientific questions into sub-queries and improves recall. HyDE generates hypothetical answers to improve retrieval in highly specialized corpora. Sovereign AI preserves competitive advantage – data never leaves for external providers.

The selection rule in one sentence: Advanced RAG justifies the investment in complexity if at least one condition holds – data is confidential, the knowledge base exceeds 50K documents, or answer accuracy is critical to the business or a regulator.